Hey friends! I’m Akash, an early-stage software/fintech investor. I write about startup strategy to help founders & operators on their company-building journeys.

You can always reach me at akash@earlybird.com to exchange notes and share feedback.

Current subscribers: 3,820

NFX defines protocol network effects as:

A Protocol Network Effect arises when a communications or computational standard is declared and all nodes and node creators can plug into the network using that protocol.

For those of you who have seen The Big Short, remember the scene where Mark Baum and Vinny Daniel visit Standard & Poor’s and are left incredulous by the agent’s explanation of why the agency has been giving triple-A ratings to mortgage-backed securities?

‘If we don’t give it to them they’ll go to Moody’s.’

Moody’s, together with Standard & Poor’s and Fitch, represents 95% of the global credit ratings market. Moody’s is a $5.5bn revenue business with 35% operating margins and a market cap of $73bn at the time of writing.
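For a rough sense of what those numbers imply, here is a back-of-envelope sketch in Python. It uses only the three figures quoted above; the derived values are implied ratios rather than reported figures, and the variable names are purely illustrative.

```python
# Figures quoted in the text (at the time of writing).
revenue_bn = 5.5          # annual revenue, $bn
operating_margin = 0.35   # operating margin
market_cap_bn = 73.0      # market capitalisation, $bn

# Implied operating income: revenue x operating margin.
operating_income_bn = revenue_bn * operating_margin

# Implied valuation multiples (derived, not reported).
cap_to_revenue = market_cap_bn / revenue_bn
cap_to_operating_income = market_cap_bn / operating_income_bn

print(f"Implied operating income: ${operating_income_bn:.1f}bn")
print(f"Market cap / revenue: {cap_to_revenue:.1f}x")
print(f"Market cap / operating income: {cap_to_operating_income:.1f}x")
```

On these inputs, the implied operating income is roughly $1.9bn, and the market is valuing the business at about 13x revenue and about 38x operating income.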

The ratings business works as follows, as Brian Yacktman explained:

To give you an idea of the way it works, they receive transactional revenue from the first time that they rate the bond, and those are paid by the debt issuer. And then they will receive annual debt monitoring fees, surveillance revenue, and it's from continuously monitoring the bond after issuance. So this is where you'll see upgrades, downgrades or maintaining initial ratings.

At various points in the twentieth century, regulators helped solidify the rating agency market structure by imposing capital requirements and rules on which agencies were able to issue ratings for investment grade bonds.

And now they have this global language being used in the capital markets, and nothing they could do could disrupt it. And so now today, virtually all fixed income investors measure risk using these three companies' conventions or protocols.

So we call this a protocol network effect.

The credit rating agencies play an integral role in the smooth functioning of the capital markets, and the express purpose of establishing a common understanding of the riskiness of a security inherently favours concentration around a few languages. Market share consolidation was inevitable.

And so languages tend to coalesce around two, three or four major languages. Thinking in those terms, I mean, imagine trying to disrupt English, Mandarin, Spanish. And so the bottom line is these companies have become the global language of the bond business.

And we call it a protocol network effect, we consider it one of the most difficult competitive advantages to disrupt.

Contrast that with credit bureaus, which are local by nature: the underlying borrower is a consumer borrowing from a local financial institution, rather than a multinational corporation issuing bonds to the global capital markets.

In the U.S., it's dominated basically by Equifax, Experian and TransUnion. But if you look across the world, there are many country-specific winners in that space.

And that's because they're collecting all this proprietary credit data on individuals and then they're selling that information to local banks to make better loans. But most banks then focus their efforts in the domestic market, both because the local knowledge is valuable there in lending and also because the governments have that implicit/explicit bank guarantees that result in all sorts of regulations that dissuades overseas growth.

Compare that, though, what I like way better with the case of Moody's is it's a global network effect, not just a local network effect. And the more global that ecosystem is, the more difficult it is to disrupt. And so then once those winners emerge, they basically just continue to plod along.

Protocol Network Effects in AI

Vanta’s founding insight was discovering how SOC 2 compliance helped Figma unlock the enterprise. At the time, in 2017, few startups had SOC 2 compliance certificates.

We built the initial product around it because it seemed like the closest thing to an industry standard.

Christina Cacioppo

The Intel x86 chip architecture was similarly anointed with industry standard recognition through the PC wave and the early onset of hyperscalers building out their data centers.

But that has been dominated by Intel for 40 years, 30 years. If you think of servers in the data center, it's an Intel x86 chip. It's like a hundred percent.

I've spent many years optimizing my software to run on an Intel x86 CPU. Suddenly if I'm not getting that performance improvement from an Intel CPU, it's fairly easy for me to switch to AMD. It's hard though, for anyone else to get into that market because all of this is run on x86 instruction sets.

These are just a couple of examples. Looking at the present moment in AI, it’s worth asking whether protocol network effects are starting to emerge there too.

‘Just as SOC-2 became the gold standard of compliance in the first SaaS wave, the ecosystem will converge around security requirements that enable our AI future.’

‘In the same way SOC-2 compliance is a must for any B2B product, I wonder if a separate standard will be coined and made a requirement for third-party LLM native applications.’

Scale is operating at a $750m annual revenue run rate and has made significant strides in cementing itself as a key player in the LLM value chain by partnering with OpenAI on fine-tuning.

In their vision for Test and Evaluation, Scale outlined some of their core beliefs about the future of model certification.

Model Certification: Once a model is nearing a new major release, model certification consists of a battery of standard tests conducted to ensure minimum satisfactory achievement of some pre-established performance standard. This can be a score against an academic benchmark, an industry-specific test, or a separate regulatory-dictated standard.

There should consequently exist a wide variety of new model certification regulatory standards, industry by industry, which government helps craft in order to ensure the safety and efficacy of model use by enterprises and the public.

Certain enterprises will establish their own internal performance standards, but above and beyond that there need to exist standards on the models’ use enforced by regulatory bodies in the relevant domains, as discussed above. The achievement of these standards should be adjudicated on a regular cadence by a third party organization, and be recognized by the bestowment and maintenance of official certifications, as is the case for certain information security certifications today.
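To make the idea of a 'battery of standard tests' against a 'pre-established performance standard' concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `Standard` class, the `certify` function, the standard names and the thresholds are hypothetical, and none of it reflects Scale's product or any real regulatory scheme.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Standard:
    """One pre-established performance standard (hypothetical)."""
    name: str
    minimum_score: float  # minimum satisfactory score, on a 0-1 scale


def certify(scores: dict[str, float], standards: list[Standard]) -> tuple[bool, list[str]]:
    """Check a model's scores against every standard.

    Returns (certified, failures). A missing score counts as a failure.
    """
    failures = [
        s.name
        for s in standards
        if scores.get(s.name, float("-inf")) < s.minimum_score
    ]
    return (not failures, failures)


# Hypothetical battery: an academic benchmark, an industry-specific test
# and a regulator-dictated standard. Names and thresholds are made up.
standards = [
    Standard("academic_benchmark", 0.80),
    Standard("industry_specific_test", 0.90),
    Standard("regulatory_standard", 0.95),
]

# Hypothetical scores for a model nearing a major release.
candidate_scores = {
    "academic_benchmark": 0.86,
    "industry_specific_test": 0.92,
    "regulatory_standard": 0.91,
}

certified, failures = certify(candidate_scores, standards)
print(certified, failures)  # prints: False ['regulatory_standard']
```

The design choice worth noticing is that certification here is all-or-nothing: clearing two of three standards still fails. Whoever gets to define the list of standards and their thresholds effectively owns the protocol.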

Scale is making the case for a government regulatory agency to become the arbiter of model certification, while adding certification-readiness to its own product suite.

Will AI follow the global capital markets in coalescing around a common language of ratings for accuracy/latency/other dimensions?

Will companies like Scale go further than Vanta and become the protocol owner rather than a participant? Or will governments assign protocol ownership to regulatory bodies?

I don’t know.

Brand in AI

Protocol ownership of model certification by regulators would be an example of a cornered resource in Hamilton Helmer’s Seven Powers.

Brand is undoubtedly another key power in the research lab wars unfolding today. As Michael Dempsey wrote:

‘It’s important to note that brand moats are not built quickly, but instead are a result of many intentional steps by companies and the people that comprise them.’

In AI we’ve seen firms like Hugging Face utilize their Transformers library to then generate a network effect to then lift up their brand as the beacon for OSAI (originally starting as the core place for those interested in NLP). HF has become the de-facto home for open-source which has allowed them to accumulate material talent and capital at what some would imagine is ahead of traditional metrics.

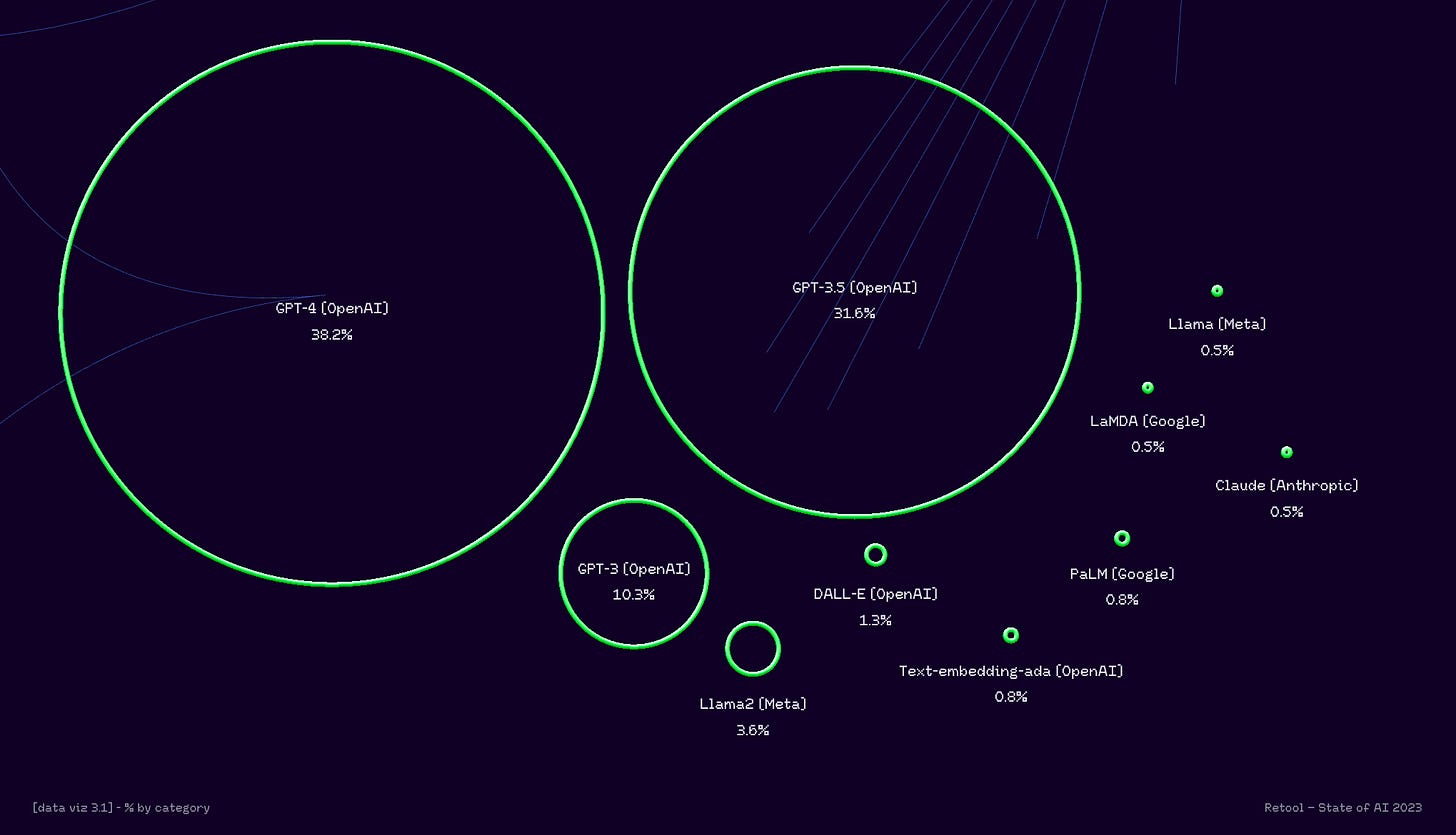

We’ve also seen the synergy between network effects and brand moats being framed as directly correlated to performance increases across the fundraising pitches for all of the large AI Labs. Most of these financing decks claim that model size and data scale paired with RLHF, will create breakaway model performance that cannot be surpassed.

The brand moats that companies build will likely only increase in importance as capital markets continue to king-make a range of startups in a given space and talent begins to more heavily consolidate amidst fast failures from the AI boom cycle of 2022-2024. The leading companies will be large beneficiaries as the Talent → Performance → Brand → Capital flywheel spins faster and faster.

As of February 2024, OpenAI’s intentional brand-building has made it a talent vortex, which has sustained its leadership in SOTA models, which has further strengthened the brand and, in turn, aided fundraising.

It may not be a protocol, but as far as brand goes it doesn’t get much better than OpenAI’s execution of a durable moat.

Charts of the week

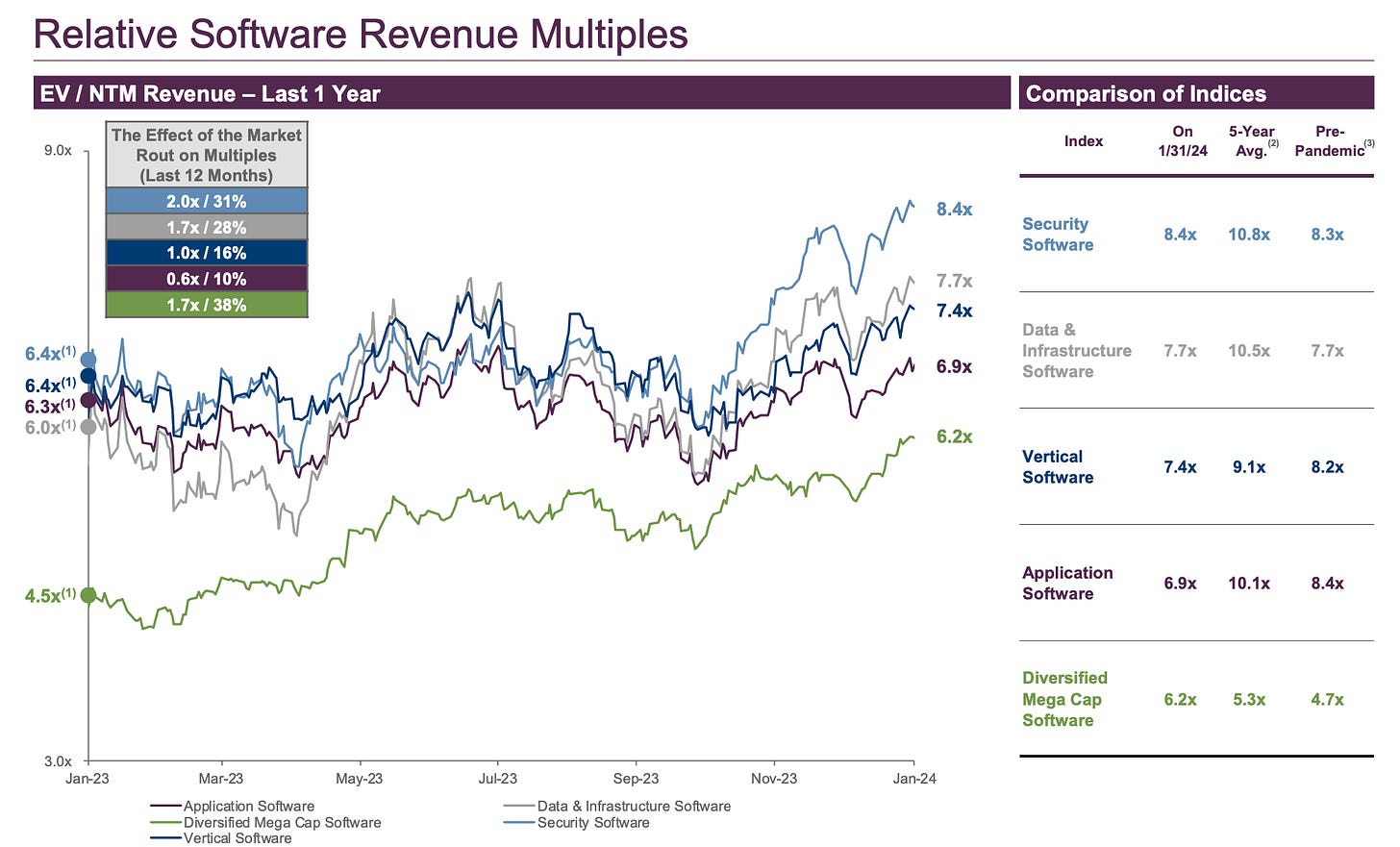

Application software trading below pre-pandemic multiples

Growth endurance under severe strain

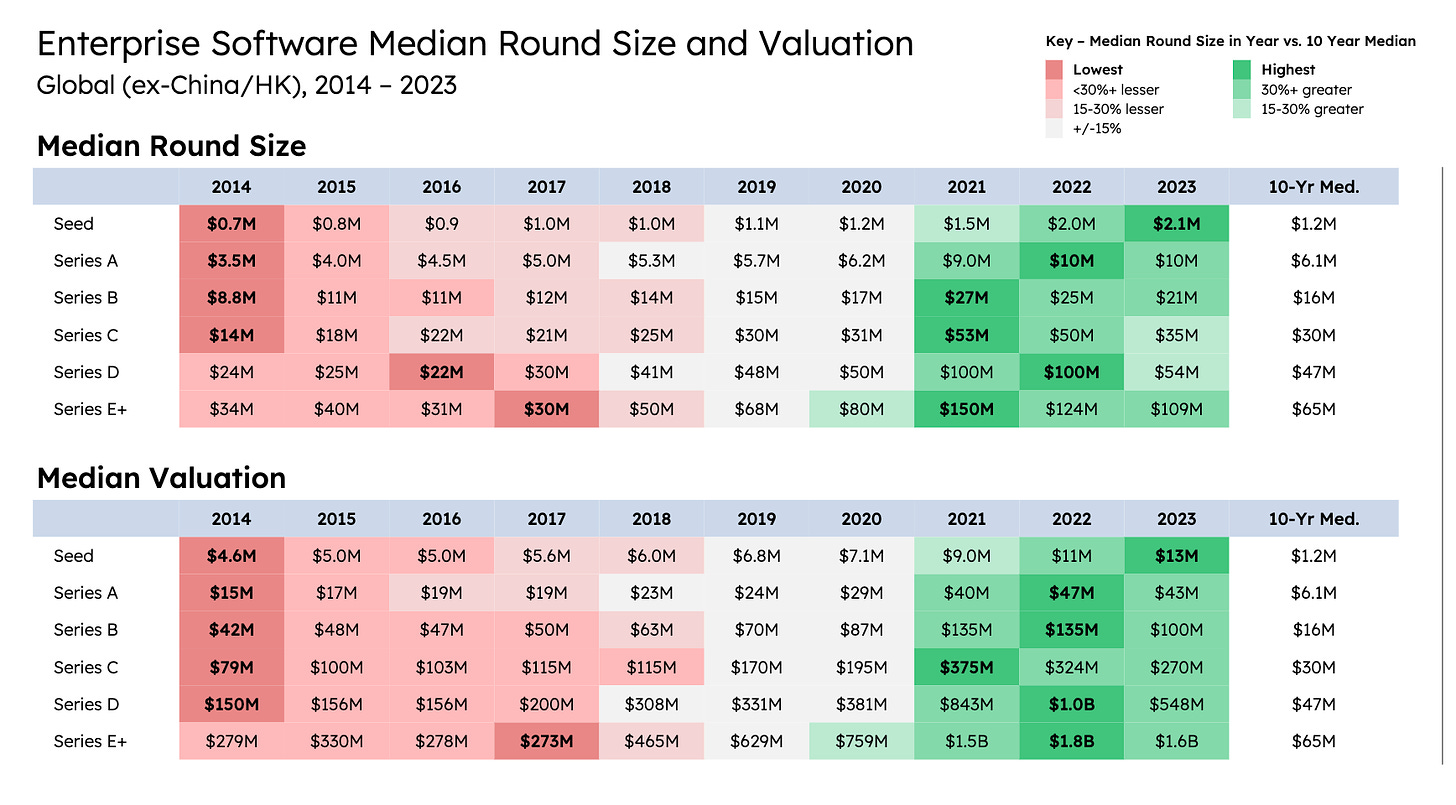

Dry powder moving earlier has pushed seed to all-time high valuations

Reading List

How to Maximize Valuation | Public Company "Rule of X" Performance

A few thoughts on intensity, Tyler Hogge

Intel Humbling, Ben Thompson

State of SaaS Capital Markets, Sapphire Ventures

Quote of the week

‘On Pearl Harbor Day 1978, the Microsoft staff convened on the second floor of a shopping center for a group portrait. Despite a rare and raging snowstorm in Albuquerque that day, 11 of the 13 employees made it. When I look at that iconic photograph today, I see a group of young people excited about their future. Back in Boston, Bill and I had been searching for the next big thing. Little knowing that we'd find it in this remote city in the Southwest. Now we had a real team behind us and a firm sense of direction. In four years, we had come a long way.

If you look closely at that photo, you'll see just about everyone's smiling. That captures our spirit back then. When I talk about the early days at Microsoft, it's hard to explain to people how much fun it was. Even with the absurd hours and arguments, we were having the time of our lives.’

Thank you for reading. If you liked it, share it with your friends, colleagues, and anyone that wants to get smarter on startup strategy. Subscribe below and find me on LinkedIn or Twitter.